‘We should be wary of answering back’

If we actually end up discovering aliens then they’ll probably just wipe us all out, Stephen Hawking has said.

If we made contact with aliens, it would probably be like when the Native Americans first met Christopher Columbus. And, in that case, things “didn’t turn out so well” for the people being visited, Professor Hawking said.

Professor Hawking made the warning in a film posted online, Stephen Hawking’s Favorite Places. It shows him taking a spacecraft across the cosmos and visiting different locations in the universe.

One of those places is Gliese 832c, a planet 16 light years away that some have speculated could contain life. But it might not be a good thing if it does, he told viewers.

“The Breakthrough Listen project will scan the nearest million stars for signs of life, but I know just the place to start looking. One day we might receive a signal from a planet like Gliese 832c, but we should be wary of answering back.”

It’s far from the first time that Professor Hawking has warned about the risk of chatting to aliens. When he helped launch the Breakthrough Listen project last year, he warned that any alien that we did actually hear probably wouldn’t be interested in killing us – precisely because it would have barely any interest in us at all.

Any civilisation that could actually read a message we sent out would need to be billions of years ahead of us, he said. “If so they will be vastly more powerful and may not see us as any more valuable than we see bacteria.”

And aliens aren’t the only powerful species that Professor Hawking has warned might kill us just because we are so insignificant that they can’t think to do otherwise.

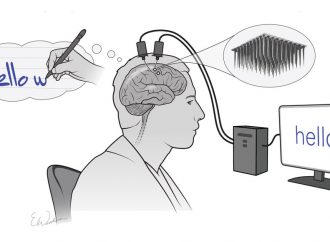

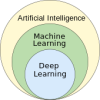

He has also said that artificial intelligence could eventually become so clever that it will accidentally kill us.

“The real risk with AI isn’t malice but competence,” Professor Hawking said last year. “A super intelligent AI will be extremely good at accomplishing its goals, and if those goals aren’t aligned with ours, we’re in trouble.

“You’re probably not an evil ant-hater who steps on ants out of malice, but if you’re in charge of a hydroelectric green energy project and there’s an anthill in the region to be flooded, too bad for the ants. Let’s not place humanity in the position of those ants.”

Source: Independent
